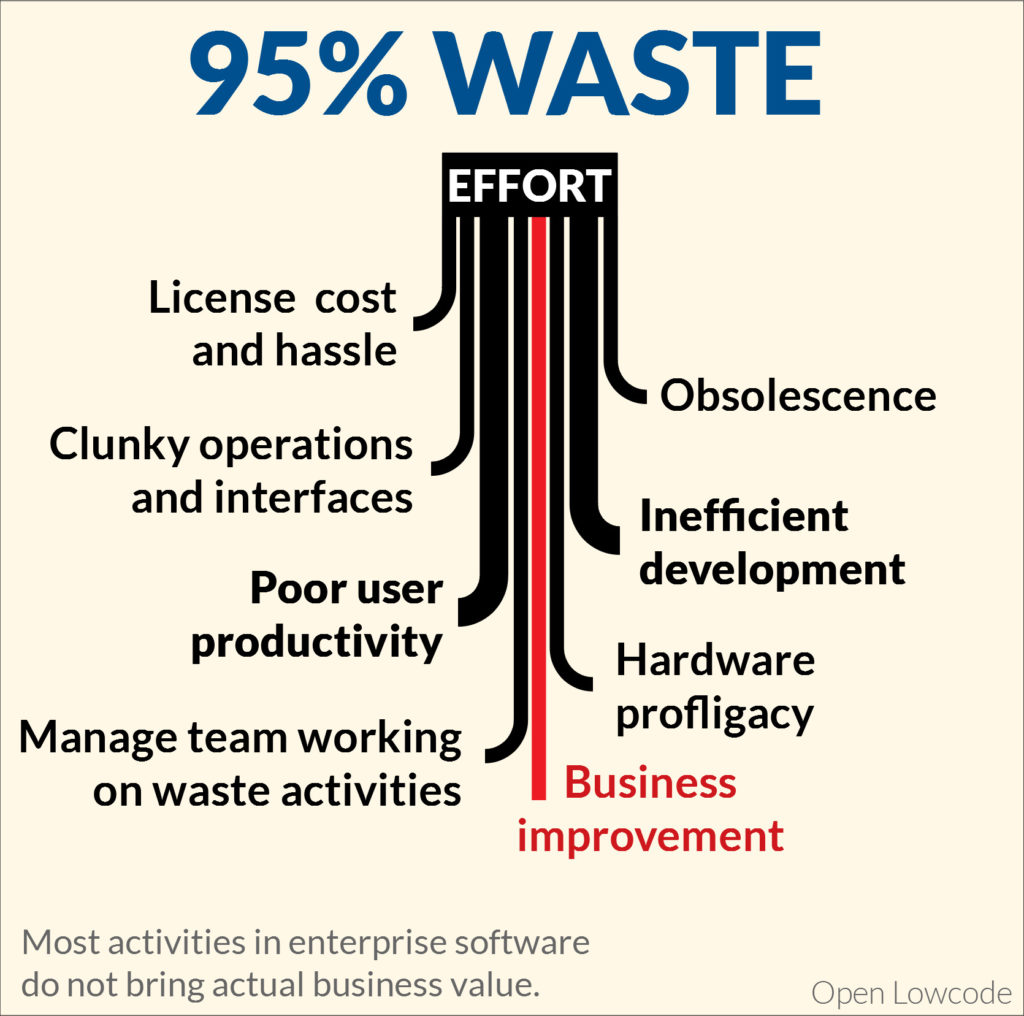

Waste in Enterprise IT

Many of the processes that run our modern world are highly optimized. When you are confronted with them, you will be hard pressed to propose a better way of doing things. Experts can explain the reason for every gesture or detail. Firefighters and aircraft pilots follow detailed, well-thought-out procedures. Even a well-organized restaurant is impressive. This is certainly not the first impression you get when working in enterprise IT. Sometimes, you will feel that you have lost your whole day or week, and a look at the budgets will confirm the feeling. This is not the result of gross individual incompetence. Many enterprise IT actors are motivated and competent professionals. They do their best with the strategy and technology that were chosen.

License Cost and Hassle

Sneaky price increases for captive customers

In 2019, if you speak with an executive in a large corporate IT department, the first topic to come up may be licenses. There is anecdotal evidence that many large software vendors have used various tricks to get more money from existing customers. Moving from purchased licenses to subscriptions also often multiplies costs. Also, you now sometimes need to pay for software used in development and test environments, in addition to production, which used not to be the case.

Cost not related to complexity and novelty

It seems natural that the cost of software should be related to its complexity, and to the novelty and innovation it contains. You would expect that, as with cars, new technologies start in luxury products and then trickle down to basic products. In the case of software, you would expect most technologies to end up as open source once they become commodities.

Still, this is often not the case. On many occasions, companies end up paying large amounts of money for software that merely bundles commodity technology and packages it with a trendy marketing message. This is even more common with cloud solutions, where runtime costs are packaged in a murky way, and everything seems to be rounded up to the next multiple of $10/month/user.

Sometimes, the cost is not related to the software's complexity and novelty but to the value the software brings. Granted, the software is expensive, but as it allows significant savings, it would seem fair that the vendor captures a large part of those savings. This is acceptable at the beginning, to let the vendor finance development with a few early adopters, but it should not last, and it is clearly outrageous if it does. In most transparent markets, competition makes prices converge on cost plus a normal margin rather than on value, and the faster this happens, the better. Enterprise software is mostly not a transparent market, so outrageous pricing can last for a long time.

Annoying license servers

So you have paid the price, fair or not, for your software. This does not mean you can use it right away. Sometimes, you need to install a license server to share tokens. This is another point of failure in your system, and, in my experience, license servers are behind a large number of user-visible incidents.
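To see why a token-based license server adds a failure point, here is a toy model of a floating-license pool. It is a hypothetical sketch, not the protocol of any real license product: every user must check out a token before working, so when the pool is exhausted (or, in real life, when the server is unreachable) the user is simply blocked.

```python
import threading


class LicenseTokenPool:
    """Toy model of a floating-license server: a fixed pool of tokens shared by users.

    Hypothetical sketch; real license servers use their own protocols.
    """

    def __init__(self, total_tokens: int) -> None:
        self._available = total_tokens
        self._holders: set[str] = set()
        self._lock = threading.Lock()

    def checkout(self, user: str) -> bool:
        # A user who already holds a token keeps it; otherwise take one if any is left.
        with self._lock:
            if user in self._holders:
                return True
            if self._available == 0:
                return False  # pool exhausted: this user cannot work
            self._available -= 1
            self._holders.add(user)
            return True

    def release(self, user: str) -> None:
        # Give the token back to the pool so another user can take it.
        with self._lock:
            if user in self._holders:
                self._holders.remove(user)
                self._available += 1


pool = LicenseTokenPool(total_tokens=2)
print(pool.checkout("alice"), pool.checkout("bob"), pool.checkout("carol"))  # True True False
pool.release("alice")
print(pool.checkout("carol"))  # True
```

In a real deployment each `checkout` is also a network call to one more server, which is exactly the extra dependency users end up noticing.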

Clunky Operations and Interfaces

I am a happy subscriber to an online video game. The small company maintaining it has millions of customers and runs a large number of servers to support the game. As far as I can see, many processes are automated; in particular, the software installed on my PC auto-updates and runs perfectly. In an enterprise context, all this could be a huge headache.

Although auto-update of clients is really easy to implement, most enterprise software platforms have not implemented it, and as a result, thick clients have a bad reputation. So mediocre web clients have become the norm. On the server side, things are sometimes no more brilliant. Some updates take several days to deploy on the server. This is the consequence of partially manual procedures, and also of the server's poor performance when updating itself.
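As an illustration of how little logic the client side of an auto-update needs, here is a minimal sketch of the version check a thick client could run at start-up. The `fetch_latest` callable is a hypothetical stand-in for a call to a version endpoint on the server; downloading and swapping the binary are left out.

```python
def parse_version(text: str) -> tuple[int, ...]:
    """Turn a dotted version string such as '2.10.1' into a comparable tuple (2, 10, 1)."""
    return tuple(int(part) for part in text.split("."))


def needs_update(installed: str, latest: str) -> bool:
    # Tuple comparison is element-wise, so '2.10.0' correctly sorts after '2.9.5',
    # which a plain string comparison would get wrong.
    return parse_version(latest) > parse_version(installed)


def check_for_update(installed: str, fetch_latest) -> str:
    """Start-up hook: ask the server for the latest version and decide what to do.

    `fetch_latest` is a hypothetical zero-argument callable returning a version string.
    """
    latest = fetch_latest()
    if needs_update(installed, latest):
        return f"download {latest} and restart"
    return "up to date"


print(check_for_update("2.9.5", lambda: "2.10.0"))   # download 2.10.0 and restart
print(check_for_update("2.10.0", lambda: "2.10.0"))  # up to date
```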

Interfacing a system with another system is often a big source of headaches. This may be because applications use obscure technologies for their interfaces. Also, the interfaces provided are very often not robust, so each time there is significant work to develop error handling and logic that should have been present on the server. In some cases, not all features of the application are available through interfaces, which leads to other unpleasant workarounds.
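A typical piece of this client-side error handling is retrying transient failures. The sketch below assumes the remote interface is wrapped in a zero-argument Python function and that connection resets and timeouts are the only errors worth retrying; `flaky_get_order` is a hypothetical stand-in for such an interface.

```python
import time


def call_with_retry(call, attempts: int = 3, base_delay: float = 0.5,
                    retry_on: tuple = (ConnectionError, TimeoutError)):
    """Call a fragile interface, retrying transient failures with exponential backoff."""
    for attempt in range(1, attempts + 1):
        try:
            return call()
        except retry_on:
            if attempt == attempts:
                raise  # give up and let the caller handle the error
            # Wait base_delay, then 2x, 4x, ... before the next try.
            time.sleep(base_delay * 2 ** (attempt - 1))


failures = iter([ConnectionError("reset"), TimeoutError("slow")])


def flaky_get_order():
    # Hypothetical interface: fails twice, then succeeds.
    error = next(failures, None)
    if error is not None:
        raise error
    return {"order": 42, "status": "OK"}


print(call_with_retry(flaky_get_order, base_delay=0.01))  # {'order': 42, 'status': 'OK'}
```

Errors outside `retry_on` propagate immediately, since retrying a rejected request usually makes things worse. Ideally the server would make such wrappers unnecessary.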

Poor user productivity

The negative impact of IT on user productivity is the area with the biggest consequences. This is because a company's salary bill is far bigger than its IT budget. The negative impact also extends to subcontractors working with the company's tools. Typically, enterprise IT can be responsible for employees wasting 10 to 15% of their time. The cost of this lost time is by far the biggest waste related to enterprise IT. Poor productivity can be linked to bad ergonomics, poor performance, and workarounds.
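A back-of-the-envelope calculation makes the order of magnitude concrete. The headcount and yearly cost per employee below are purely hypothetical figures chosen for illustration, not data from any company.

```python
def yearly_cost_of_lost_time(employees: int, loaded_cost_per_employee: float,
                             share_of_time_lost: float) -> float:
    """Salary cost, per year, of the time employees lose because of IT."""
    return employees * loaded_cost_per_employee * share_of_time_lost


# Hypothetical figures: 1,000 employees at 80,000 € fully loaded cost per year.
for share in (0.10, 0.15):
    cost = yearly_cost_of_lost_time(1_000, 80_000, share)
    print(f"{share:.0%} lost -> {cost:,.0f} € per year")
```

With these assumptions, 10 to 15% of lost time amounts to 8 to 12 million euros a year, a figure to compare with the IT budget of a company of that size.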

Bad Ergonomics

Employees lose time because of bad ergonomics. This is especially visible when moving from a spreadsheet to a web application. Spreadsheets have extremely convenient ergonomic shortcuts, such as copying and pasting across several cells, formulas, and powerful search features. Also, spreadsheets allow end users to record macros. For an action that would be a filter plus dragging a value in a spreadsheet, most common web apps require users to work one line at a time, sometimes with a wasteful sequence like:

- Search with criteria

- Select one element and open the detail view page

- Open the modify page

- Perform the modification

- Re-run the search and continue with the next line

The sequence above, repeated over, say, 50 objects, means 250 actions for what, in a spreadsheet, is done in 2 actions: filter and drag the new value.
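The arithmetic can be written down directly. This sketch assumes exactly the five steps listed above per object, and adds a hypothetical per-action response time to show what the action count means in minutes.

```python
STEPS_PER_OBJECT = 5  # search, open detail view, open modify page, modify, search again


def web_app_actions(objects: int, steps_per_object: int = STEPS_PER_OBJECT) -> int:
    """User actions needed when a change must be applied one record at a time."""
    return objects * steps_per_object


def spreadsheet_actions(objects: int) -> int:
    """Filter, then drag the new value: constant, whatever the number of rows."""
    return 2


def minutes_spent(actions: int, seconds_per_action: float) -> float:
    """Waiting time only; the user's own think time comes on top."""
    return actions * seconds_per_action / 60


print(web_app_actions(50), spreadsheet_actions(50))      # 250 2
print(round(minutes_spent(web_app_actions(50), 5), 1))   # 20.8
```

The web app cost grows linearly with the number of objects, while the spreadsheet cost stays flat.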

Performance

The poor performance of some applications makes bad ergonomics even worse. There are two levels here:

- Pathological performance is when some actions take several dozen seconds, or even several minutes. Very often, this is the result of actions on several objects being performed one by one on the database, or of a massive algorithmic error. It is of course very bad.

- Mediocre performance is when an individual action takes several seconds. Typically, enterprise web application developers are happy when they reach 3 to 7 seconds per page display. This is already very slow, and significantly slows down employees' work. In the example above, with a 5-second response time, the 50-object action will take around 20 minutes of boring grind.
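The "one by one on the database" pattern behind many pathological cases can be shown with the standard `sqlite3` module. The table and column names are hypothetical. With an in-memory SQLite database both versions run instantly; the difference shows when each statement is a network round-trip to a remote database server, where the row-by-row version pays that round-trip once per object.

```python
import sqlite3


def make_db(rows: int) -> sqlite3.Connection:
    # Hypothetical table of business objects, all initially open.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE item (id INTEGER PRIMARY KEY, status TEXT)")
    conn.executemany("INSERT INTO item (id, status) VALUES (?, ?)",
                     [(i, "OPEN") for i in range(rows)])
    return conn


def close_one_by_one(conn: sqlite3.Connection, ids: list[int]) -> int:
    """Anti-pattern: one statement per object. Returns the number of statements sent."""
    statements = 0
    for item_id in ids:
        conn.execute("UPDATE item SET status = 'CLOSED' WHERE id = ?", (item_id,))
        statements += 1
    return statements


def close_in_one_statement(conn: sqlite3.Connection, ids: list[int]) -> int:
    """Set-based alternative: a single UPDATE covering every object.

    Assumes `ids` is non-empty and within SQLite's limit on bound parameters.
    """
    placeholders = ",".join("?" for _ in ids)
    conn.execute(f"UPDATE item SET status = 'CLOSED' WHERE id IN ({placeholders})", ids)
    return 1


ids = list(range(50))
row_by_row, set_based = make_db(1_000), make_db(1_000)
print(close_one_by_one(row_by_row, ids), close_in_one_statement(set_based, ids))  # 50 1
```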

Workarounds

Many enterprise applications are not complete. This means that, to perform business actions, various workarounds are necessary. Typically, a “comment” field is filled with cryptic codes to replace missing features. Also, some business actions that follow a defined logic are not implemented as a single step. To illustrate: as a developer, I often have to close an issue and create a related one in the issue tracker. The new issue is part of the same project and the same package as the original issue, and should be linked to it. I was very frustrated not to have a one-click action for that, although I was using a major tool on the market.
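Such a one-click action is small once the logic is written down. The sketch below uses a hypothetical, minimal issue data model (not the API of any real issue tracker) to close an issue and open a linked follow-up in the same project and package.

```python
from dataclasses import dataclass, field
from itertools import count

_next_id = count(1)  # hypothetical id generator


@dataclass
class Issue:
    title: str
    project: str
    package: str
    status: str = "OPEN"
    linked_to: list[int] = field(default_factory=list)
    issue_id: int = field(default_factory=lambda: next(_next_id))


def close_and_follow_up(original: Issue, new_title: str) -> Issue:
    """One-step business action: close an issue and create a linked follow-up."""
    original.status = "CLOSED"
    follow_up = Issue(title=new_title, project=original.project,
                      package=original.package, linked_to=[original.issue_id])
    original.linked_to.append(follow_up.issue_id)  # link both ways
    return follow_up


bug = Issue("Crash on save", project="CRM", package="export")
follow = close_and_follow_up(bug, "Add regression test for save crash")
print(bug.status, follow.project, follow.package, follow.linked_to == [bug.issue_id])
# CLOSED CRM export True
```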

Obsolescence

Replacing an old specific application

You probably know the scenario: you have an old application developed in the 1970s. It has old ‘text terminal’ ergonomics. However, it runs smoothly, and very often, it does not cost much. You could also have a web application from the early 2000s that is not very pretty but is fast. At some point, you will replace those applications. You may be forced to if you can no longer procure the hardware or upgrade the software. Sometimes, companies even choose to replace an old system although they could have run it longer.

Such legacy retirement projects are always much more complex than planned, and the end result is a worse and more costly application. The first big mistake is always to underestimate the complexity. Younger people working on a legacy retirement project will always think the complexity of the old system is due to dumb choices made in the past. In reality, most features of the old software have good reasons to be there. The previous generation was as smart as we are, and sometimes they even had to be smarter to develop IT systems 30 years ago. If you replace your legacy system with specific development, you will quickly find missing features during tests, and you will be able to implement them. The overcost will be reasonable, say 15 to 30%. If you replace your legacy system with packaged software, it may not be possible at all to add those features, or the overcost may be significant: you could be looking at doubling the cost of the project or more.

Upgrading software packages

You may have a software package in place. Typically, you deployed it in the last decade. The typical support period of a software package version may be close to 3 years, so you may already have done 3 or 4 upgrades. In practice, that means you may only keep a version in production for 2 years or less, as you need one year to perform the upgrade project. As you cannot afford to perform an upgrade every 18 months, the reality is a mix of keeping software unsupported for some time and still performing upgrade projects every 3 years or so. Those upgrade projects are more or less difficult depending on the level of customization and the disruptions introduced by the software package.

Inefficient development

I have seen this situation dozens of times in my career: a business expert requests a feature that can be summarized in 3 sentences. This feature is not even one of the famous “just figure it out” requests that are more complex than they look. Still, it will take days of effort to develop. Every time, the business expert is shocked by the final price, leading to jokes like ‘every word you say will cost 2000€’. Sometimes, this is because you are talking to a junior development team, but not always.

Packaged software

To extend the features offered by packaged software, you need APIs (interfaces) to:

- add the function to the pages of the application

- perform the necessary actions on the business objects

Both interfaces may be non-existent, or incredibly inconvenient and cumbersome. Thus, most of the energy may be spent figuring out how to add the extension to the software package. In the best case, there is an inconvenient way to do this. Very often, it will not have the required performance, and this will cause issues later. In the worst case, you may end up decompiling and modifying the package executable to add the extension. I do not blame the developers who do this, as they have no choice; the package vendor is at fault for not providing sufficient APIs. However, when an upgrade comes, this situation turns out to be the worst possible.
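For contrast, here is a hypothetical sketch of what the two interfaces could look like if a package exposed them: one to add actions to pages, one to hook into events on business objects. None of these names come from a real product. The point is that extension code only touches these documented entry points, so an upgrade that keeps them stable does not break it, unlike a patch to a decompiled executable.

```python
from collections import defaultdict
from typing import Callable


class ExtensionRegistry:
    """Hypothetical extension points a package could expose to its customers."""

    def __init__(self) -> None:
        self._page_actions: dict[str, list[tuple[str, Callable]]] = defaultdict(list)
        self._hooks: dict[str, list[Callable]] = defaultdict(list)

    def add_page_action(self, page: str, label: str, handler: Callable) -> None:
        # Extension point 1: add a function to a page of the application.
        self._page_actions[page].append((label, handler))

    def on(self, event: str, hook: Callable) -> None:
        # Extension point 2: act on business objects when an event happens to them.
        self._hooks[event].append(hook)

    def page_actions(self, page: str) -> list[str]:
        return [label for label, _ in self._page_actions[page]]

    def fire(self, event: str, obj: dict) -> None:
        # Called by the package itself, e.g. after saving an object.
        for hook in self._hooks[event]:
            hook(obj)


registry = ExtensionRegistry()
registry.add_page_action("order_detail", "Recompute discount", lambda order: order)
registry.on("order_saved", lambda order: order.setdefault("audit", []).append("checked"))

order = {"id": 7}
registry.fire("order_saved", order)
print(registry.page_actions("order_detail"), order["audit"])
# ['Recompute discount'] ['checked']
```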

Infrastructure in specific development

Specific development allows you to develop any logic you need. However, you may need to implement basic functions every time, such as security, transaction management, auditing, and page layouts. In the best case, your local development team has implemented some common functions to do this. However, those functions are very likely to be limited and incomplete, as some of them are hard to get right. Also, project managers will not authorize the technical team to spend time on those infrastructure features, which are perceived as not bringing value. In the end, a developer may spend 5% of their time implementing the 10 lines of code for the requested business logic, and 95% implementing infrastructure features not linked to the specific request.
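One way to keep the business logic down to its few lines is to write the infrastructure once and apply it to every business action. The sketch below is hypothetical (a single decorator, an in-memory audit list, a SQLite table called `orders`), but it shows the separation: permission check, transaction and audit trail live in `business_action`, while `apply_discount` contains only the requested logic.

```python
import functools
import sqlite3

audit_log: list[str] = []  # hypothetical audit trail kept in memory


def business_action(permission: str):
    """Wrap a business function with a permission check, a transaction and an audit entry."""
    def decorate(func):
        @functools.wraps(func)
        def wrapper(user: dict, conn: sqlite3.Connection, *args, **kwargs):
            if permission not in user["permissions"]:
                raise PermissionError(f"{user['name']} lacks {permission}")
            with conn:  # commits on success, rolls back if func raises
                result = func(conn, *args, **kwargs)
            audit_log.append(f"{user['name']} ran {func.__name__}{args}")
            return result
        return wrapper
    return decorate


@business_action("order.discount")
def apply_discount(conn: sqlite3.Connection, order_id: int, percent: float) -> None:
    # The requested business logic itself.
    conn.execute("UPDATE orders SET amount = amount * (1 - ? / 100.0) WHERE id = ?",
                 (percent, order_id))


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, amount REAL)")
conn.execute("INSERT INTO orders VALUES (1, 200.0)")
conn.commit()
apply_discount({"name": "ana", "permissions": {"order.discount"}}, conn, 1, 10)
print(conn.execute("SELECT amount FROM orders WHERE id = 1").fetchone()[0], audit_log)
```

Every new business action then costs only its own lines plus one decorator line, which is roughly what a good platform should provide out of the box.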

Difficulty of testing

Development is 50% writing a feature, and 50% testing and correcting it. You will make 5 to 10 modifications before your development is stable enough to move to the next stage. To test a feature, you need to deploy your development to a test environment. Ideally, you can test on your PC, and deployment is as fast as moving a file. In the worst case, however, you need to deploy your development to a server, and it may take 30 minutes just to launch the environment to perform your test. Sometimes it takes even longer, as you need to recreate data specific to your problem.
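When business logic can run outside the server, the test cycle shrinks to seconds on the developer's PC, and the data specific to the problem is rebuilt automatically for each run. The rule below (`overdue_invoices`) is a hypothetical example, tested with the standard `unittest` module; the file can be run with `python -m unittest`.

```python
import unittest


def overdue_invoices(invoices: list[dict], today: int) -> list[int]:
    """Hypothetical business rule: ids of unpaid invoices past their due day."""
    return [inv["id"] for inv in invoices if not inv["paid"] and inv["due"] < today]


class OverdueInvoicesTest(unittest.TestCase):
    def setUp(self) -> None:
        # Test data is recreated in memory before every test,
        # instead of being rebuilt by hand on a shared server.
        self.invoices = [
            {"id": 1, "paid": False, "due": 10},
            {"id": 2, "paid": True, "due": 5},
            {"id": 3, "paid": False, "due": 30},
        ]

    def test_only_unpaid_and_late(self) -> None:
        self.assertEqual(overdue_invoices(self.invoices, today=20), [1])

    def test_nothing_overdue_early(self) -> None:
        self.assertEqual(overdue_invoices(self.invoices, today=1), [])
```

Each of the 5 to 10 modify-and-retest cycles then costs a fraction of a second rather than an environment start-up.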

Hardware Profligacy

The famous Moore’s law is often quoted as predicting that the processing power of electronics doubles every 18 months. As a result, the mindset in IT has mostly been not to care about performance: however wasteful programs were, electronics would always save us. To be honest, it is unavoidable that some of the progress in electronics is invested in developer productivity. Nobody would develop complex programs in assembly language anymore. However, in enterprise software, and for that matter in consumer internet, this trade of performance for ease of development has not always been smart. It is very common that there are alternatives, existing or potential, that would offer almost the same ease of development while using 10 to 100 times less processing power. My favourite example is the use of interpreted languages such as PHP or JavaScript. Those languages are not much more convenient than compiled languages like C++ or Java, and the compiled languages will be much faster, often 10 times faster or more. In short, with good technology, you can divide your cloud bills by a factor of 10.
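The link between speed and cloud bills is simple sizing arithmetic. In this sketch, the load and the per-server capacities are hypothetical; it also assumes the bill is proportional to the number of servers, which ignores storage, network and licensing costs.

```python
import math


def servers_needed(requests_per_second: float, capacity_per_server: float) -> int:
    """Servers required to absorb a load, rounded up to whole machines."""
    return math.ceil(requests_per_second / capacity_per_server)


# Hypothetical load and capacities, with a 10x speed ratio between the two stacks.
load = 5_000
slow_stack = servers_needed(load, capacity_per_server=100)
fast_stack = servers_needed(load, capacity_per_server=1_000)
print(slow_stack, fast_stack, slow_stack / fast_stack)  # 50 5 10.0
```

Because of the rounding up, the ratio is exactly 10 only when the load is large compared to a single server; for small workloads the gain is smaller.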

Managing teams working on wasteful activities

You need to manage those wasteful activities, and this compounds the cost. You can have extraordinary productivity with a few people, let’s say 5 to 7, working together. However, when you are more than 15 to 20, you will need to add a lot of structure and management layers, and communication will be far worse. I would estimate the loss at 30% to 50% of additional overhead, sometimes even more. So, in a typical scenario, instead of being 3 times less efficient due to bad technology and organization, you may end up being 5 times less efficient.
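The compounding is a simple multiplication, sketched below under the assumption that the coordination overhead applies on top of the technology inefficiency rather than adding to it.

```python
def combined_inefficiency(base_factor: float, management_overhead: float) -> float:
    """Inefficiency from technology and organization, multiplied by the extra
    coordination overhead of a large team (0.3 means 30% overhead)."""
    return base_factor * (1 + management_overhead)


for overhead in (0.3, 0.5, 0.7):
    print(f"{overhead:.0%} overhead -> {combined_inefficiency(3, overhead):.1f}x")
```

With a 3x base and 30% to 50% overhead, this gives 3.9x to 4.5x; reaching 5x corresponds to the "sometimes even more" case, around 67% overhead.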

Solutions

There are many other inefficiencies we did not discuss here. Natural human conflicts exist in any big organization. Many issues originate in a lack of training and of adequate skills. Certainly, all of those also have an impact. However, the big strategic mistakes always seem related to a bad choice of technology and approach. There are, however, solutions to this sorry state. Cloud-based packaged software is certainly an answer in some cases. Vertical integration ensures some efficiency, and provided the packaged software really answers the needs and providers can be switched easily, it is certainly an appropriate solution. For other cases, the winning strategy may be a good low-code platform. Open Lowcode provides an open-source platform, so there is no license cost. It is based on common technology, so there is almost no obsolescence issue. And while we are still at the beginning, the priority of Open Lowcode is clearly ease of development, robustness and user ergonomics.
